10 Common Hibernate Mistakes That Cripple Your Performance

Do you think your application could be faster if you just solved your Hibernate problems?

Then I have good news for you!

I have fixed performance problems in a lot of applications, and most of them were caused by the same set of mistakes. Even better, most of these mistakes are easy to fix. So, it probably doesn’t take much to improve your application.

Here is a list of the 10 most common mistakes that cause Hibernate performance problems and how you can fix them.

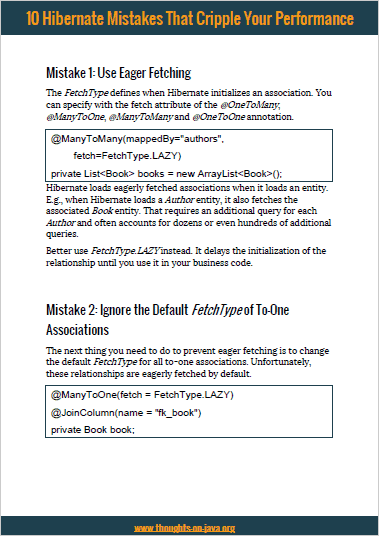

Mistake 1: Use Eager Fetching

The implications of FetchType.EAGER have been discussed for years, and there are lots of posts explaining it in great detail. I wrote one of them myself. But unfortunately, it’s still one of the two most common reasons for performance problems.

The FetchType defines when Hibernate initializes an association. You can specify it with the fetch attribute of the @OneToMany, @ManyToOne, @ManyToMany and @OneToOne annotations.

    @Entity
    public class Author {

        @ManyToMany(mappedBy = "authors", fetch = FetchType.LAZY)
        private List<Book> books = new ArrayList<Book>();

        ...
    }

Hibernate loads eagerly fetched associations when it loads an entity. E.g., when Hibernate loads an Author entity, it also fetches the associated Book entities. That requires an additional query for each Author and often accounts for dozens or even hundreds of additional queries.

This approach is very inefficient, and it gets even worse when you consider that Hibernate does that whether or not you use the association. It’s better to use FetchType.LAZY instead. It delays the initialization of the relationship until you use it in your business code. That avoids a lot of unnecessary queries and improves the performance of your application.

Luckily, the JPA specification defines FetchType.LAZY as the default for all to-many associations. So, you just have to make sure that you don’t change it. But unfortunately, that’s not the case for to-one relationships.

Mistake 2: Ignore the Default FetchType of To-One Associations

The next thing you need to do to prevent eager fetching is to change the default FetchType for all to-one associations. Unfortunately, these relationships are eagerly fetched by default. In some use cases, that’s not a big issue because you’re just loading one additional database record. But that quickly adds up if you’re loading multiple entities and each of them specifies a few of these associations.

So, better make sure that all of your to-one associations set the FetchType to LAZY.

    @Entity
    public class Review {

        @ManyToOne(fetch = FetchType.LAZY)
        @JoinColumn(name = "fk_book")
        private Book book;

        ...
    }

Mistake 3: Don’t Initialize Required Associations

When you use FetchType.LAZY for all of your associations to avoid mistakes 1 and 2, you will find several n+1 select issues in your code. This problem occurs when Hibernate performs 1 query to select n entities and then has to perform an additional query for each of them to initialize a lazily fetched association.

Hibernate fetches lazy relationships transparently, which makes this kind of problem hard to find in your code. You’re just calling the getter method of your association, and you most likely don’t expect Hibernate to perform any additional query.

    List<Author> authors = em.createQuery("SELECT a FROM Author a", Author.class).getResultList();

    for (Author a : authors) {
        // getBooks() triggers an additional query for each Author
        log.info(a.getFirstName() + " " + a.getLastName() + " wrote "
                + a.getBooks().size() + " books.");
    }

The n+1 select issues get a lot easier to find if you use a development configuration that activates Hibernate’s statistics component and monitors the number of executed SQL statements.
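One way to set up such a development configuration is sketched below. It only collects the setting in a plain map, which could then be handed to `Persistence.createEntityManagerFactory(String, Map)`. The property `hibernate.generate_statistics` is Hibernate’s switch for the statistics component; the class name `DevHibernateSettings` and the persistence unit name in the comment are made up for this example.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper that collects development-only Hibernate settings. */
final class DevHibernateSettings {

    private DevHibernateSettings() {
    }

    /** Returns settings that activate Hibernate's statistics component. */
    static Map<String, Object> statisticsEnabled() {
        Map<String, Object> settings = new LinkedHashMap<>();
        // Collect session metrics such as the number of executed JDBC statements.
        settings.put("hibernate.generate_statistics", "true");
        return settings;
    }

    public static void main(String[] args) {
        // With JPA on the classpath, the map could be passed on like this
        // (the unit name is an assumption):
        // Persistence.createEntityManagerFactory("my-persistence-unit", statisticsEnabled());
        statisticsEnabled().forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

Collecting statistics adds some overhead, so this kind of setting is probably best kept to development and test configurations. With the statistics component active, Hibernate writes session metrics like the following to the log.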

    15:06:48,362 INFO [org.hibernate.engine.internal.StatisticalLoggingSessionEventListener] - Session Metrics {
        28925 nanoseconds spent acquiring 1 JDBC connections;
        24726 nanoseconds spent releasing 1 JDBC connections;
        1115946 nanoseconds spent preparing 13 JDBC statements;
        8974211 nanoseconds spent executing 13 JDBC statements;
        0 nanoseconds spent executing 0 JDBC batches;
        0 nanoseconds spent performing 0 L2C puts;
        0 nanoseconds spent performing 0 L2C hits;
        0 nanoseconds spent performing 0 L2C misses;
        20715894 nanoseconds spent executing 1 flushes (flushing a total of 13 entities and 13 collections);
        88175 nanoseconds spent executing 1 partial-flushes (flushing a total of 0 entities and 0 collections)
    }

As you can see, the JPQL query and the calls of the getBooks method for each of the 12 selected Author entities caused 13 queries. That is a lot more than most developers expect when they implement such a simple code snippet.

You can easily avoid that by telling Hibernate to initialize the required association. There are several different ways to do that. The easiest one is to add a JOIN FETCH clause to your FROM clause.

    Author a = em.createQuery(
            "SELECT a FROM Author a JOIN FETCH a.books WHERE a.id = 1", Author.class)
        .getSingleResult();
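To make the statement count tangible, here is a plain-Java simulation of both access patterns. No Hibernate is involved: `CountingDb` is a hypothetical stand-in that only counts how many statements would be sent, and the book counts are arbitrary fake data.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Plain-Java simulation of the n+1 select issue; no Hibernate involved. */
final class NPlusOneSketch {

    /** Hypothetical stand-in for a database that counts executed statements. */
    static final class CountingDb {
        private int statements;

        /** Simulates "SELECT a FROM Author a". */
        List<Integer> selectAuthorIds(int n) {
            statements++;
            List<Integer> ids = new ArrayList<>();
            for (int id = 1; id <= n; id++) {
                ids.add(id);
            }
            return ids;
        }

        /** Simulates the query that initializes one lazy books collection. */
        int selectBookCount(int authorId) {
            statements++;
            return 1 + authorId % 3; // arbitrary fake data
        }

        /** Simulates a single query that joins authors and their books. */
        Map<Integer, Integer> selectAuthorsWithBooks(int n) {
            statements++;
            Map<Integer, Integer> bookCounts = new LinkedHashMap<>();
            for (int id = 1; id <= n; id++) {
                bookCounts.put(id, 1 + id % 3);
            }
            return bookCounts;
        }

        int statements() {
            return statements;
        }
    }

    /** One query for the authors plus one per author for its books. */
    static int statementsWithLazyLoop(int n) {
        CountingDb db = new CountingDb();
        for (int id : db.selectAuthorIds(n)) {
            db.selectBookCount(id);
        }
        return db.statements();
    }

    /** One query that returns authors and books together. */
    static int statementsWithJoinFetch(int n) {
        CountingDb db = new CountingDb();
        db.selectAuthorsWithBooks(n);
        return db.statements();
    }

    public static void main(String[] args) {
        System.out.println("lazy loop:  " + statementsWithLazyLoop(12));
        System.out.println("join fetch: " + statementsWithJoinFetch(12));
    }
}
```

For 12 authors, the loop variant counts 13 statements, which matches the session metrics in this section, while the joined variant counts 1.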

Mistake 4: Select More Records Than You Need

I’m sure you’re not surprised when I tell you that selecting too many records slows down your application. But I still see this problem quite often when I analyze an application in one of my consulting calls.

One of the reasons might be that JPQL doesn’t support the OFFSET and LIMIT keywords you use in your SQL query. It might seem like you can’t limit the number of records retrieved within a query. But you can, of course, do that. You just need to set this information on the Query interface and not in the JPQL statement.

I do that in the following code snippet. I first order the selected Author entities by their id and then tell Hibernate to retrieve the first 5 entities.

    List<Author> authors = em.createQuery("SELECT a FROM Author a ORDER BY a.id ASC", Author.class)
            .setMaxResults(5)
            .setFirstResult(0)
            .getResultList();
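If you page through a result, the two values follow from a page number and a page size: setFirstResult expects the zero-based position of the first record, and setMaxResults the number of records per page. The following sketch shows that arithmetic in plain Java; `PageWindow` is a hypothetical helper and not part of JPA or Hibernate.

```java
/** Hypothetical helper that turns a page number into the two paging values. */
final class PageWindow {

    private final int firstResult;
    private final int maxResults;

    private PageWindow(int firstResult, int maxResults) {
        this.firstResult = firstResult;
        this.maxResults = maxResults;
    }

    /**
     * @param page     zero-based page index
     * @param pageSize number of records per page
     */
    static PageWindow of(int page, int pageSize) {
        if (page < 0 || pageSize < 1) {
            throw new IllegalArgumentException("page must be >= 0 and pageSize >= 1");
        }
        // multiplyExact fails loudly instead of silently overflowing
        return new PageWindow(Math.multiplyExact(page, pageSize), pageSize);
    }

    /** Value for Query.setFirstResult. */
    int firstResult() {
        return firstResult;
    }

    /** Value for Query.setMaxResults. */
    int maxResults() {
        return maxResults;
    }

    public static void main(String[] args) {
        PageWindow thirdPage = PageWindow.of(2, 5);
        System.out.println("firstResult=" + thirdPage.firstResult()
                + ", maxResults=" + thirdPage.maxResults());
    }
}
```

Paging only gives stable results if the query has a deterministic ORDER BY clause, like the one on a.id in the snippet above.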

Mistake 5: Don’t Use Bind Parameters

Bind parameters are simple placeholders in your query and provide a lot of benefits that are not performance related:

- They are extremely easy to use.

- Hibernate performs required conversions automatically.

- Hibernate escapes Strings automatically which prevents SQL injection vulnerabilities.

And they also help you to implement a high-performance application.

Most applications execute a lot of the same queries which just use a different set of parameter values in the WHERE clause. Bind parameters allow Hibernate and your database to identify and optimize these queries.

You can use named bind parameters in your JPQL statements. Each named parameter starts with a “:” followed by its name. After you’ve defined a bind parameter in your query, you need to call the setParameter method on the Query interface to set the bind parameter value.

    TypedQuery<Author> q = em.createQuery(
            "SELECT a FROM Author a WHERE a.id = :id", Author.class);
    q.setParameter("id", 1L);
    Author a = q.getSingleResult();
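A small plain-Java comparison illustrates why the statement text matters. It only builds query strings and executes nothing; the class and method names are made up for this sketch. With concatenation, every value produces a different statement, and untrusted input can change the structure of the query. With a bind parameter, the text stays the same for every value, so anything that is keyed on the statement text can be reused.

```java
/** Compares query strings built by concatenation with a parameterized one. */
final class BindParameterSketch {

    private BindParameterSketch() {
    }

    /** Anti-pattern: the value becomes part of the statement text. */
    static String concatenated(String idFromRequest) {
        return "SELECT a FROM Author a WHERE a.id = " + idFromRequest;
    }

    /** Statement text with a named bind parameter; it never contains the value. */
    static String parameterized() {
        return "SELECT a FROM Author a WHERE a.id = :id";
    }

    public static void main(String[] args) {
        // Two different values, two different statements
        System.out.println(concatenated("1"));
        System.out.println(concatenated("2"));
        // Untrusted input changes the WHERE clause itself
        System.out.println(concatenated("1 OR 1=1"));
        // One statement text, regardless of the value that is bound later
        System.out.println(parameterized());
    }
}
```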

Mistake 6: Perform All Logic in Your Business Code

For us as Java developers, it feels natural to implement all logic in our business layer. We can use the language, libraries, and tools that we know best. (And we call that layer the business layer for a reason, right?)

But sometimes, the database is the better place to implement logic that operates on a lot of data. You can do that by calling a function in your JPQL or SQL query or with a stored procedure.

Let’s take a quick look at how you can call a function in your JPQL query. And if you want to dive deeper into this topic, you can read my posts about stored procedures.

You can use standard functions in your JPQL queries in the same way as you call them in an SQL query. You just reference the name of the function followed by an opening parenthesis, an optional list of parameters and a closing parenthesis.

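    // Object[].class makes the query typed and avoids an unchecked assignment
    TypedQuery<Object[]> q = em.createQuery(
            "SELECT a, size(a.books) FROM Author a GROUP BY a.id", Object[].class);
    List<Object[]> results = q.getResultList();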

And with JPA’s function() expression, you can also call database-specific or custom database functions.

    TypedQuery<Book> q = em.createQuery(
            "SELECT b FROM Book b WHERE b.id = function('calculate', 1, 2)",
            Book.class);
    Book b = q.getSingleResult();

Mistake 7: Call the flush Method Without a Reason

This is another popular mistake. I’ve often seen developers call the flush method of the EntityManager after they’ve persisted a new entity or updated an existing one. That forces Hibernate to perform a dirty check on all managed entities and to create and execute SQL statements for all pending insert, update or delete operations. That slows down your application because it prevents Hibernate from using several internal optimizations.

Hibernate stores all managed entities in the persistence context and tries to delay the execution of write operations as long as possible. That allows Hibernate to combine multiple update operations on the same entity into 1 SQL UPDATE statement, to bundle multiple identical SQL statements via JDBC batching and to avoid the execution of duplicate SQL statements that return an entity which you already used in your current Session.

As a rule of thumb, you should avoid any calls of the flush method. One of the rare exceptions is JPQL bulk operations, which I explain in mistake 9.
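The effect of delayed writes can be illustrated with a heavily simplified, plain-Java model. This is not how Hibernate is implemented: `TinyContext` is a hypothetical class that only remembers which entities are dirty and counts the UPDATE statements a flush would produce.

```java
import java.util.LinkedHashSet;
import java.util.Set;

/** Plain-Java model of delayed writes; not Hibernate's actual implementation. */
final class WriteBehindSketch {

    /** Hypothetical, heavily simplified persistence context. */
    static final class TinyContext {
        private final Set<Long> dirtyEntityIds = new LinkedHashSet<>();
        private int updateStatements;

        /** Marks an entity as changed without touching the database. */
        void change(long entityId) {
            dirtyEntityIds.add(entityId);
        }

        /** Counts one UPDATE per dirty entity, then forgets the pending changes. */
        void flush() {
            updateStatements += dirtyEntityIds.size();
            dirtyEntityIds.clear();
        }

        int updateStatements() {
            return updateStatements;
        }
    }

    /** Changes the same entity several times and flushes after every change. */
    static int flushAfterEveryChange(int changes) {
        TinyContext context = new TinyContext();
        for (int i = 0; i < changes; i++) {
            context.change(1L);
            context.flush();
        }
        return context.updateStatements();
    }

    /** Changes the same entity several times and flushes once at the end. */
    static int flushOnce(int changes) {
        TinyContext context = new TinyContext();
        for (int i = 0; i < changes; i++) {
            context.change(1L);
        }
        context.flush();
        return context.updateStatements();
    }

    public static void main(String[] args) {
        System.out.println("flush after every change: " + flushAfterEveryChange(3));
        System.out.println("flush once:               " + flushOnce(3));
    }
}
```

In this model, three changes to the same entity cost three UPDATE statements when each one is flushed immediately, and only one when the flush is left to the end of the unit of work.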

Mistake 8: Use Hibernate for Everything

Hibernate’s object-relational mapping and various performance optimizations make the implementation of most CRUD use cases very easy and efficient. That makes Hibernate a popular and good choice for a lot of projects. But that doesn’t mean that it’s a good solution for all kinds of projects.

I discussed that in great detail in one of my previous posts and videos. JPA and Hibernate provide great support for most of the standard CRUD use cases which create, read or update a few database records. For these use cases, the object-relational mapping provides a huge boost to your productivity, and Hibernate’s internal optimizations provide great performance.

But that changes when you need to perform very complex queries, implement analysis or reporting use cases or perform write operations on a huge number of records. None of these situations is a good fit for JPA’s and Hibernate’s query capabilities and the lifecycle-based entity management.

You can still use Hibernate if these use cases are just a small part of your application. But in general, you should take a look at other frameworks, like jOOQ or Querydsl, which are closer to SQL and avoid any object relational mappings.

Mistake 9: Update or Delete Huge Lists of Entities One by One

When you look at your Java code, it feels totally fine to update or remove one entity after the other. That’s the way we work with objects, right?

That might be the standard way to handle Java objects but it’s not a good approach if you need to update a huge list of database records. In SQL, you would just define an UPDATE or DELETE statement that affects multiple records at once. Databases handle these operations very efficiently.

Unfortunately, that’s not that easy with JPA and Hibernate. Each entity has its own lifecycle, and if you want to update or remove several of them, you first need to load them from the database. Then you can perform your operations on each of the entities, and Hibernate will generate the required SQL UPDATE or DELETE statement for each of them. So, instead of updating 1000 database records with just 1 statement, Hibernate will perform at least 1001 statements: 1 to load the entities and 1 for each of the 1000 updates.

It should be obvious that it takes more time to execute 1001 statements instead of just 1. Luckily, you can also change multiple records with a single statement in JPA and Hibernate by using a JPQL, native SQL or Criteria update or delete query.

But it has a few side effects that you should be aware of. You perform the update or delete operation in your database without using your entities. That provides better performance, but it also ignores the entity lifecycle, and Hibernate can’t update any caches.

I explained that in great detail in How to use native queries to perform bulk updates.

To make it short, you shouldn’t use any lifecycle listeners and you need to call the flush and clear methods on your EntityManager before you execute a bulk update. The flush method will force Hibernate to write all pending changes to the database before the clear method detaches all entities from the current persistence context.

    // write pending changes and detach all entities before the bulk update
    em.flush();
    em.clear();

    Query query = em.createQuery("UPDATE Book b SET b.price = b.price*1.1");
    query.executeUpdate();

Mistake 10: Use Entities for Read-Only Operations

JPA and Hibernate support several different projections. You should make use of that if you want to optimize your application for performance. The most obvious reason is that you should only select the data that you need in your use case.

But that’s not the only reason. As I showed in a recent test, DTO projections are a lot faster than entities, even if you read the same database columns.

Using a constructor expression in your SELECT clause instead of an entity is just a small change. But in my test, the DTO projection was 40% faster than entities. And even though the exact numbers depend on your use case, you shouldn’t pass on such an easy and efficient way to improve the performance.
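A constructor expression could look like the following sketch. The DTO name `BookValue`, the package `com.example` and the Book attributes id and title are assumptions for this example; the entities in this article don’t show their attributes. In a real project, the class would be public and declared in the package that the query names.

```java
/** Hypothetical read-only DTO that a constructor expression could instantiate. */
final class BookValue {

    /**
     * JPQL constructor expression. It needs the fully qualified class name;
     * "com.example" and the attributes id and title are assumptions.
     */
    static final String QUERY =
            "SELECT new com.example.BookValue(b.id, b.title) FROM Book b";

    private final Long id;
    private final String title;

    /** The parameters match the expressions listed in the SELECT clause. */
    public BookValue(Long id, String title) {
        this.id = id;
        this.title = title;
    }

    public Long getId() {
        return id;
    }

    public String getTitle() {
        return title;
    }

    public static void main(String[] args) {
        // With JPA on the classpath, the query could be executed like this:
        // List<BookValue> books = em.createQuery(BookValue.QUERY, BookValue.class).getResultList();
        BookValue book = new BookValue(1L, "Example title");
        System.out.println(book.getId() + ": " + book.getTitle());
    }
}
```

The returned objects are plain Java objects. They are not managed by the persistence context, so this only fits use cases that read data and don’t change it.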

Learn how to Find and Fix Hibernate Performance Problems

As you have seen, there are several small things that might slow down your application. You can easily avoid them and build a high-performance persistence layer.

Nice article! Thanks a lot for tips!!

When the application and the DB are on the same server, everything works OK.

But when the application and the DB are on different servers (the servers are in the same subnet), the application crawls (it’s working 5 to 10 times slower).

We are using Java, Hibernate, c3p0…

Any idea what could be the problem?

Hi,

There can be lots of different reasons for that.

You should check:

Regards,

Thorben

Nice article. Refreshed important concepts in a short time.

Thanks

Hi,

Nice article.

Could you please put some insights on the below scenario on

Mistake 7: Call the flush Method Without a Reason

I have an entity which has database triggers to populate some columns.

In this case if I don’t clear entityManager , next query of that entity will not return the changed columns in the database as the cached entity will be returned by Hibernate.

Hence I am forced to use flush and clear.

    public class myService {
        . . . .
        @Autowired
        private EntityManager entityManager;
        . . . .
        MyEntity e = myEntityRepo.saveAndFlush(myEntity );
        entityManager.clear();
        return myEntityRepo.findOne(e.getId() );
    }

Am I doing it right or what is the right approach in this case?

Thank you

Hi,

I explained an easier and better solution here: Hibernate Tips: Map generated values.

The @Generated annotation tells Hibernate that an attribute gets generated by the database and it automatically performs the required query.

Regards,

Thorben

Nice to know as ever.

Thanks a lot!

Thanks for reading and commenting, Paul 🙂

Thanks for good article.

I agree with everything except ‘Mistake 6: Perform all Logic in Your Business Code’…

On the contrary, spreading logic across different parts of the application infrastructure is bad practice.

More or less, Hibernate is an ORM that hides your database details.

Migrating from one database to another without any pain is actually one of the biggest benefits… with stored procedures, you need to migrate them as well, and probably the biggest problem arises if you use an in-memory DB for your integration tests…

And I haven’t even mentioned how to support SP code (debug, test, etc.), but that’s a different topic.

Hi Oleksii,

in general, that is a valid concern and the most popular argument against stored procedures.

But it assumes that architectural concerns and database portability are more important than performance. If that is really the case for your application, then I agree with you: Don’t use stored procedures.

All these concerns quickly disappear when your application is too slow and performance optimization becomes more important.

Stored procedures can be a lot faster than your Java application if you need to handle huge amounts of data. So why not use the system that is designed and optimized for these kinds of tasks?

If you need the additional performance, you shouldn’t ignore stored procedures just because you want to have all your business logic in one place.

Regards,

Thorben

PS: And the argument of using an in-memory DB to run your tests starts a completely different discussion. Let me just ask 1 question: How do you check that your application works correctly if you don’t test it with the most important subsystem? 😉

Hi, Thorben.

Thanks for your response.

Indeed you can use even native sql, hibernate allows this possibility. But I would have avoided this approach to the last to be honest. It’s desperate case.

About testing and in-memory db…

Different types of tests cover different layers of the application.

– Unit tests cover the specific logic of a class/method (mocking all irrelevant dependencies).

– Integration tests allow you to cover the workflow of your application (and I prefer to use an in-memory DB for IT tests)

– Smoke tests – to check connections/availability of subsystem…

– Auto/robo tests – can be used with the most real envs…

Hi Oleksii,

It’s, of course, a good idea to use different types of tests for your application.

My main issue with using different database systems is that these tests don’t provide a lot of value. You need to run all of them a second time using the production database. Otherwise, you’re expecting that the auto/robo tests cover all kinds of queries that you executed against the in-memory database.

Nevertheless, this might be a good approach if you don’t have the resources or time to run the test suite against a real database.

Regards,

Thorben

Hi Thorben.

The most important thing here is that, using an ORM, you can switch your tests/application to ANY database and nothing should break; it can be your real DB vendor with a special test schema or just in-memory.

But again, does it really make sense to run IT tests with a real DB?

A good ORM framework must guarantee consistent results across different DBs (of course, I’m not taking native SQL or stored procedures into account here).

Otherwise, effort should be directed at testing the ORM for this specific SQL dialect 🙂

Using a real DB in IT testing is a big pain, because:

1) your tests are slow

2) your DB server needs to be available all the time

3) you need to maintain DDL for new/modified DB objects (if you don’t use an auto-generate DDL strategy)

4) you can run into deadlock issues during parallel execution while clearing/preparing tables before/after a test.

Usually, I follow these rules:

– run unit and IT tests after every commit

– run automated acceptance tests once a day (e.g., at night)

Thanks, Oleksii.

Hi Oleksii,

You should have at least 1 test stage that uses the real DB with a dataset that’s similar to the one in production. Otherwise, you can’t be sure that your application works fine in production.

If that makes your development and test setup easier, you can, of course, use a different database for IT tests. But please keep in mind, that these tests will not tell you if your code will work in production.

Regards,

Thorben

>>> To make it short, you shouldn’t use any lifecycle listeners and call the flush and clear methods on your EntityManager before you execute a bulk update.

I was confused at first look, because I interpreted that as – you shouldn’t use … and you (shouldn’t) call the flush and clear… %)

Hey Sergey,

thanks for the feedback. I changed that sentence to “To make it short, you shouldn’t use any lifecycle listeners and you need to call the flush and clear methods on your EntityManager before you execute a bulk update.”

Regards,

Thorben

Great tips!

Thanks